Introduction

Raytracing is modern computer graphics technique used to render life-like images for videos and animations. While it’s a relatively modern technique–coinciding with the birth of digital modeling–the inspiration for the methods can be traced back to ancient Greece. While some Greeks truly believed that our eye’s emit the ability to see and not that our eyes collect the light, Ray Tracers actually work on that very principle. Starting from the camera or ‘eye’, the ray tracer sends out ‘light’ rays and monitors their interactions with the objects in the scene. At the end of the trace, an image is compiled based on the final state of all the rays.

Findings

Moller and Trumbore describe a simple and elegant method to determine whether a particular ray in R3 will intersect with a given triangle with vertices <V1, V2, V3>. Through a simple transformation of the coordinate system, the location of the intersection and distance between the triangle and ray’s origin can be quickly computed with minimal memory overhead and no precompilation of plane equations.

The basis of the method described by Moller and Trumbore is quite straightforward. First consider the ray as a parametrized line of the form:

This should be familiar to anyone from algebra 1 in high school, but the real magic comes from considering the equation for a triangle. A parameterized equation for a triangle looks like ![]()

where u, v > 0 and u+v ≤ 1. I had never seen this treatment before; but after taking a good look at it, it makes sense and perhaps even obvious in hindsight. From here we need to relocate our origin through simple vector arithmetic (i.e. a coordinate mapping of (V1, V2, V3)∈R3o → (E1, E2, O)∈R3V3.).

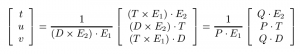

The only tricky part is the change of basis mapping from <X, Y, Z> → < t, u, v> which can be found using cramer’s rule[ref]Cramer’s Rule is an explicit solution of a system of linear equations where the number of unknowns matches the number of equations. Checkout the wikipedia article for the details.[/ref].

And while this may not add anything as far as the math is concerned, I liked this representation of the process.

Like I said above, once the mapping is complete, a simple analysis of the resulting <t, u, v> vector will fully describe the interaction between the triangle and the ray. Coloration can be readily handled based on the u and v factors, opacity might vary with t, and countless interesting ideas can be implemented.

Importance

While I will not dwell too long on the applications of this technique and its merits, here is a chart directly from the paper (so take it as such):  While not a significant improvement in computational time, they did report a substantial improvement in memory usage.

While not a significant improvement in computational time, they did report a substantial improvement in memory usage.

Discussion

Beyond the simplicity of the theory and implementation, the benefit of this technique over others lies in the reduced memory overhead when dealing with mesh surfaces. The authors of the paper estimate this savings to range from 25% to 50% in some cases, and memory management can certainly be a central hurdle for many programmers in a range of fields. Nevertheless, this technique does not necessarily scale well for applications where computational time is important, such as real-time rendering, since virtually all factors must be recalculated with a small change in any one of them. While the transformation matrix can be stored in memory and therefore offer quicker response time in some situations, the matrix coefficients are highly and non-linearly impacted by changes in ray position. This is, of course, the trade-off between memory intensive methods and computationally intensive methods in general, but the authors offer no remark in this direction.

Since the paper is rather dated (1998), I wonder what the state of the art is these days in terms of base algorithms (and not necessarily all the architecture specific enhancements and speed-ups)?

The Paper

Moller, T. & Trumbore, B. Fast, minimum storage ray-triangle intersection. Doktorsavhandlingar vid Chalmers Tekniska Hogskola 109–115 (1998). doi:10.1080/10867651.1997.10487468