While my interests are, admittedly, quite broad, I’ve always been fascinated by signal processing and remote sensing. Just imagine the technological marvel: we can accurately measure the amount of chlorophyll, a grove of marijuana or the amount of snow from a satellite that is travelling thousands of miles per hour around our planet. If that’s not magic then I don’t know what is!

So given this interest of mine, it’s no surprise that I’ve really gotten into the latest development in a tracer-release study I’m involved in. Without going into too much detail, this study will look at the diffusive processes within Apalachicola Bay in the hope of better understanding how plankton patches form and degrade through time. To do this, we’ll be releasing drifters and dye into the water at specific points, and then watching and recording as the dye spreads across and downward into the water column. Overall, we hope that it will be a nice, simple experiment.

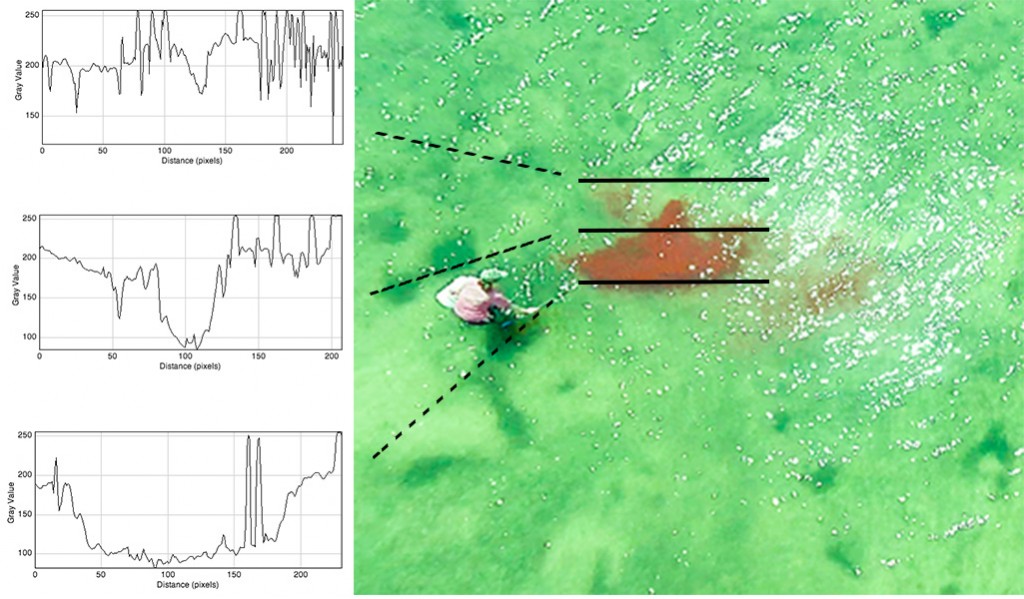

And now to get to the part about remote sensing. One of the tools at our disposal is an aerial drone from which we can record photos and videos along with altitude and GPS location. The drone should provide an ideal vantage point to watch and record the changes in the dye patch over time, at least in the horizontal direction. It is my hope to find a practical way to quantify (at least roughly) the patch as it changes. Here is a low-resolution sample image of a dye patch taken with our drone.

While the dye is clearly visible and certainly stands out from the surrounding water, the glare makes it a bit tricky to be sure where the dye patch ends on the right-hand side. In addition, there are a lot of muddy pinkish regions where the cutoff between dye and not-dye becomes difficult to judge.

False color

The first thing I wanted to do was to switch the colors around a bit so I could get a better feeling for the sorts of structures and shapes I should be looking for in the image. To do this I decided to change the hue of the image, which changes the perceived contrast of the dye patch (though, notably, it doesn’t change the physical contrast of the patch).
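For anyone who wants to try a hue shift in code rather than in an image editor, here is a minimal sketch. It is not the tool used for the image in this post; the function name `shift_hue`, the 120-degree rotation and the two-pixel test image are all assumptions made for illustration.

```python
import colorsys

import numpy as np


def shift_hue(rgb, shift):
    """Rotate the hue of an RGB image (floats in [0, 1]) by `shift` turns.

    A shift of 1/3 is a 120-degree rotation. Saturation and value are left
    untouched, so only the perceived colors change.
    """
    out = np.empty(rgb.shape, dtype=float)
    height, width, _ = rgb.shape
    for y in range(height):
        for x in range(width):
            h, s, v = colorsys.rgb_to_hsv(*rgb[y, x])
            out[y, x] = colorsys.hsv_to_rgb((h + shift) % 1.0, s, v)
    return out


# Hypothetical stand-in for the drone photo: one pure-red and one grey pixel.
sample = np.array([[[1.0, 0.0, 0.0], [0.5, 0.5, 0.5]]])
shifted = shift_hue(sample, 1.0 / 3.0)
```

The per-pixel loop is slow on a full-size photo, but it keeps the sketch dependent only on NumPy and the standard library. Grey pixels have no hue, so they come out unchanged.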

Here we can definitely make out the main body of the dye along with a couple of sub-patches. I also noticed that most of the diffused area in the center of the image is not as dye-filled as I had originally thought, at least from the signal that we have here. Moving forward I would like to improve our resolution in this diffused region in particular.

As a proof of concept we can actually already quantify the main body of the patch in a nice, robust sort of way. Below you’ll see a sample of what I call ‘virtual transects’. By plotting the intensities of the magenta region of the color spectrum against the position we can make these plots of relative dye concentration[1]. The data from these plots could be used to quantify the total amount of dye, the spreading rate or even to construct a 2D mesh of the dye extent.
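A virtual transect of this kind could be sketched roughly as follows. This is only an illustration: the function name `virtual_transect`, the nearest-neighbour sampling and the synthetic image are assumptions, and the choice of which channel or color index to sample is left to the reader.

```python
import numpy as np


def virtual_transect(channel, start, end, n_samples=200):
    """Sample a 2D intensity array along the straight line from start to end.

    `start` and `end` are (row, col) pairs. Nearest-neighbour sampling is
    used. Returns the distance from `start` in pixels and the sampled values.
    """
    rows = np.linspace(start[0], end[0], n_samples)
    cols = np.linspace(start[1], end[1], n_samples)
    r = np.clip(np.rint(rows).astype(int), 0, channel.shape[0] - 1)
    c = np.clip(np.rint(cols).astype(int), 0, channel.shape[1] - 1)
    distance = np.hypot(rows - start[0], cols - start[1])
    return distance, channel[r, c]


# Synthetic stand-in for one image channel: a background of 200 with a
# darker band of 100 playing the role of the dye.
image = np.full((50, 100), 200.0)
image[:, 40:60] = 100.0
distance, profile = virtual_transect(image, start=(25, 0), end=(25, 99), n_samples=100)
```

Plotting `profile` against `distance` gives a relative concentration curve for that transect; repeating it for many transects is one way to build up a 2D picture of the patch.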

In the plots we can clearly see that the dye patch has values of ~100 while the background is ~200 so there is still a lot of wiggle room. Let’s see if we can improve the contrast between the dye and the background a bit more.

Channels

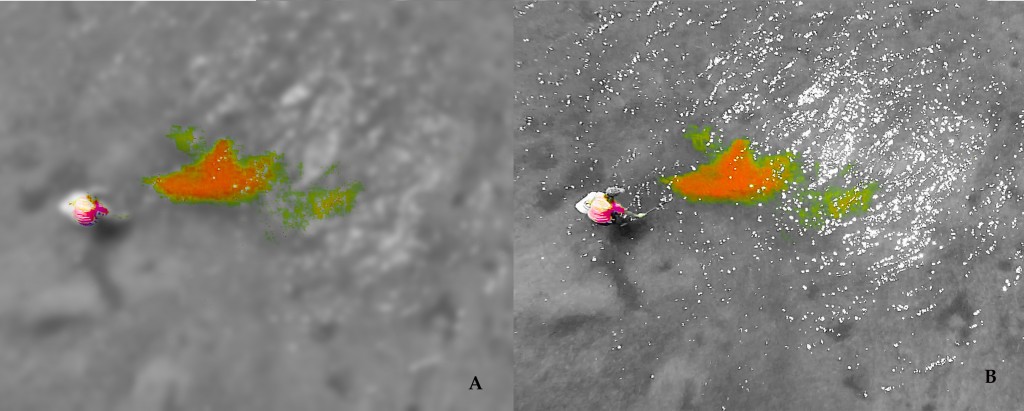

By preferentially changing the saturation and contrast of the image, the patch’s internal structure can be discerned. We can also start making out more of that diffused region now too.
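As a rough idea of what such an adjustment does numerically, here is a small sketch. The two helper functions, their default percentiles and the sample pixels are hypothetical and are not the settings used for the image above.

```python
import numpy as np


def boost_saturation(rgb, factor):
    """Push each pixel away from its own grey value.

    `rgb` holds floats in [0, 1]; a factor above 1 increases saturation.
    """
    grey = rgb.mean(axis=2, keepdims=True)
    return np.clip(grey + factor * (rgb - grey), 0.0, 1.0)


def stretch_contrast(rgb, low_pct=2, high_pct=98):
    """Linearly rescale so the chosen percentiles map to 0 and 1."""
    lo, hi = np.percentile(rgb, [low_pct, high_pct])
    if hi <= lo:
        return rgb.copy()  # flat image, nothing to stretch
    return np.clip((rgb - lo) / (hi - lo), 0.0, 1.0)


# Hypothetical pixels: one slightly reddish, one neutral grey.
sample = np.array([[[0.6, 0.4, 0.5], [0.5, 0.5, 0.5]]])
vivid = boost_saturation(sample, 2.0)
stretched = stretch_contrast(sample, 0, 100)
```

Both operations only rescale values that are already in the image, so they can make faint structure easier to see but cannot add signal that the camera did not record.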

While this does look a lot better (especially if you view the full sized image), we are still plagued by that background glare and the noise that the water’s surface causes.

Since we are interested in features that are about the size of the patch, we can actually filter out most of the signals that are smaller or larger than the scales we are interested in by using an FFT.

When using an FFT, we actually convert the image into the frequency domain and then apply a filter before converting the image back into real space. While I could write an entire article on just this aspect, I will instead pass over it and merely direct any interested readers to this article by Paul Bourke.

When applying the FFT filter I decided that everything smaller than 10 pixels or larger than 200 pixels should be removed. I figured that anything measurable in the patch would fall within this range, since the really small stuff is less important than the medium-scale aspects. Also, the whole point of applying this filter was to remove the high-frequency noise and the glare.
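A band-pass filter with those two cutoffs could be sketched with NumPy along these lines. The function name `fft_bandpass`, the hard-edged mask and the synthetic test image are assumptions for illustration; the filter actually applied to the photo may well use smoother edges.

```python
import numpy as np


def fft_bandpass(image, min_size=10, max_size=200, keep_mean=True):
    """Keep structures with wavelengths between min_size and max_size pixels."""
    spectrum = np.fft.fft2(image)
    # Spatial frequencies in cycles per pixel along each axis.
    fy = np.fft.fftfreq(image.shape[0])[:, None]
    fx = np.fft.fftfreq(image.shape[1])[None, :]
    radius = np.hypot(fy, fx)
    # A wavelength of N pixels corresponds to a frequency of 1/N.
    mask = (radius >= 1.0 / max_size) & (radius <= 1.0 / min_size)
    if keep_mean:
        mask[0, 0] = True  # keep the zero-frequency term (overall brightness)
    return np.fft.ifft2(spectrum * mask).real


# Synthetic test image: a 32-pixel wave (should survive), a 4-pixel ripple
# standing in for surface noise, and a 256-pixel gradient standing in for glare.
x = np.arange(256)[None, :] * np.ones((256, 1))
medium = np.sin(2 * np.pi * x / 32)
ripple = 0.5 * np.sin(2 * np.pi * x / 4)
glare = 20.0 * np.sin(2 * np.pi * x / 256)
filtered = fft_bandpass(100.0 + medium + ripple + glare)
```

On this synthetic image only the mean brightness and the 32-pixel wave remain. A hard cutoff like this can produce ringing near sharp edges in real photos, which is one reason a smoother mask is often preferred.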

The filter worked very well and removed virtually all of the glare from the image. While any information about the patch that is behind the glare is technically not there, the filter effectively interpolates from the surrounding pixels to replace the glare.

From this final image (A), I can reliably measure the area and color of the patch, which goes a long way toward quantifying the dye patch. I still have some work ahead in this area, but now that we have good, strong signals it seems quite feasible to move forward.
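One simple way to turn a filtered image into an area estimate is to threshold it and count pixels, as in the sketch below. The threshold of 150, the ground resolution of 0.5 m per pixel and the function name `patch_area` are all hypothetical; in practice the ground resolution would have to come from the drone's altitude and camera geometry.

```python
import numpy as np


def patch_area(intensity, threshold, metres_per_pixel):
    """Estimate patch area by counting pixels darker than `threshold`.

    Returns the area in square metres and the boolean mask of dye pixels.
    """
    mask = intensity < threshold
    area = mask.sum() * metres_per_pixel ** 2
    return area, mask


# Synthetic stand-in: background of 200 with a 20 x 30 pixel patch of 100.
image = np.full((100, 100), 200.0)
image[20:40, 30:60] = 100.0
area, mask = patch_area(image, threshold=150.0, metres_per_pixel=0.5)
mean_intensity = image[mask].mean()  # relative signal inside the patch
```

The result depends strongly on the chosen threshold, so it is worth checking how much the area changes when the threshold is moved up or down a little.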

Notes

- It is important to remember the difference between relative and absolute concentration here. Just about any remote sensing technique provides a relative measurement rather than an actual or absolute measurement.